"One-third of adults still sleep with a comfort object."

"The global rate for washing hands after using the toilet is under 20 percent."

This information comes from the results of surveys.

A survey is a method of gathering information from a sample of people, traditionally with the intention of generalizing the results to a larger population.

But is the information reliable?

To know whether we can trust survey data, we need to be aware of any biases.

Photo by Lukas Blazek on Unsplash

Survey Terminology

Bias

Bias is a systematic error in how a survey collects or measures responses. It causes the results to overestimate or underestimate the true value for the population.

Sample

It's usually not practical for researchers to analyze a whole population.

A sample is a smaller set of results that the researchers use to represent a larger population.

Validity

The survey results should relate to and support the researchers' conclusion.

A valid survey measures what it's supposed to measure.

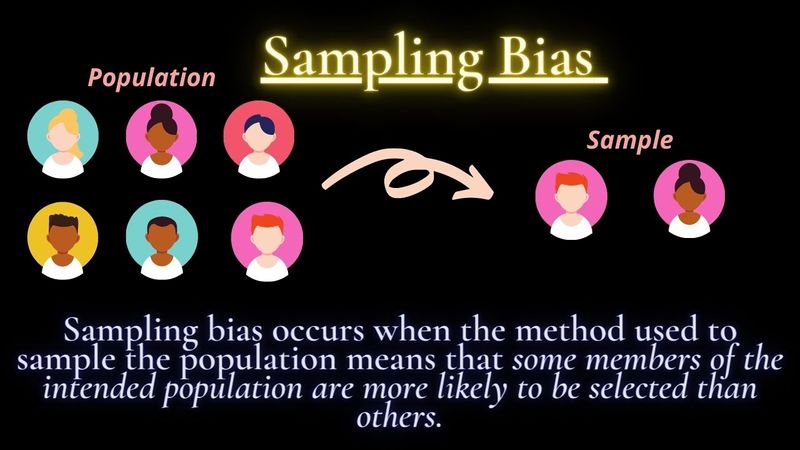

1. Sampling Bias

Sampling bias occurs when the sample doesn't represent the population. Ideally, the sample should be chosen at random from everyone who meets the survey's criteria. If the sample is not random, the survey is less valid.

Example: The Truman-Dewey Presidential Race

In 1948, President Harry Truman ran against Thomas Dewey in the US Presidential election.

Nationwide telephone surveys predicted that Dewey would defeat Truman in a landslide, but Truman won.

What went wrong with the survey?

Wealthy people, who mostly supported Dewey, were more likely to have phones in their homes.

Lower- to middle-class families, who mostly preferred Truman, were less likely to have phones at home to answer the survey.

The survey was inaccurate because its sample was biased towards Dewey voters.
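To see how a phone-only sample can tilt a poll, here is a minimal Python sketch. Every rate in it (the share of wealthy voters, phone ownership, and candidate preference) is an invented assumption for illustration, not historical data, and `simulate_phone_poll` is a hypothetical helper name.

```python
import random

def simulate_phone_poll(n_voters=100_000, sample_size=2_000, seed=0):
    """Compare a phone-only sample with a simple random sample.

    All rates below are made up for illustration, not historical data.
    """
    rng = random.Random(seed)
    voters = []
    for _ in range(n_voters):
        wealthy = rng.random() < 0.30                             # assumed share of wealthy voters
        has_phone = rng.random() < (0.80 if wealthy else 0.20)    # assumed phone ownership
        votes_dewey = rng.random() < (0.65 if wealthy else 0.40)  # assumed preference
        voters.append((has_phone, votes_dewey))

    def dewey_share(group):
        # Fraction of the group that prefers Dewey.
        return sum(votes_dewey for _, votes_dewey in group) / len(group)

    phone_owners = [v for v in voters if v[0]]
    return {
        "population": dewey_share(voters),
        "random_sample": dewey_share(rng.sample(voters, sample_size)),
        "phone_sample": dewey_share(rng.sample(phone_owners, sample_size)),
    }

result = simulate_phone_poll()
for name, share in result.items():
    print(f"{name}: {share:.1%} for Dewey")
```

Under these assumptions, the random sample should land close to the population's true share, while the phone-only sample should overstate Dewey's support because phone owners lean wealthy.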

2. Non-Response Bias

Non-response bias occurs when the people who respond to your survey differ significantly from those who chose not to.

People might not respond to a survey because:

The survey asks for sensitive information that they don't want to share.

The survey questions aren't relevant to them.

Their email account sends the survey invite to a spam folder.

Example: Sensitive Information

A survey asking young adults about their adult-video viewing habits may lead to non-response bias. The topic is very personal, so many people won't want to share this information and will skip the survey.

3. Response Bias

Response bias occurs when survey participants answer inaccurately, which skews the data and makes meaningful analysis difficult.

People might answer a survey inaccurately because:

Leading questions with suggestive language push them to answer a certain way.

They're embarrassed to give a socially unacceptable answer.

They're asked to give "all or nothing" responses — a hard yes or no that doesn't account for their mixed feelings.

They're overeager to support the survey's conclusion.

Example: Patient Satisfaction

In an NCBI study of patients' satisfaction with their primary physician:

Patients with a higher overall satisfaction score were more likely to participate in satisfaction surveys than patients with a low overall satisfaction score.

As a result, physicians overestimated their patients' satisfaction, and healthcare providers couldn't see the big picture.
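The effect described above can be sketched with a small Python simulation. The 1 to 5 satisfaction scale and the response probabilities are assumptions chosen for illustration. They do not come from the study, and `simulate_satisfaction_survey` is a hypothetical helper name.

```python
import random

def simulate_satisfaction_survey(n_patients=50_000, seed=1):
    """Show how the average shifts when satisfied patients respond more often.

    The scale and response probabilities are illustrative assumptions.
    """
    rng = random.Random(seed)
    # True satisfaction of every patient, from 1 (low) to 5 (high).
    scores = [rng.randint(1, 5) for _ in range(n_patients)]

    # Assumed: the chance of returning the survey rises with satisfaction.
    respond_prob = {1: 0.10, 2: 0.15, 3: 0.25, 4: 0.40, 5: 0.60}
    responses = [s for s in scores if rng.random() < respond_prob[s]]

    true_mean = sum(scores) / len(scores)
    observed_mean = sum(responses) / len(responses)
    return true_mean, observed_mean

true_mean, observed_mean = simulate_satisfaction_survey()
print(f"True average satisfaction:   {true_mean:.2f}")
print(f"Average among survey takers: {observed_mean:.2f}")
```

With these assumed probabilities, the average among the people who answered comes out noticeably higher than the true average across all patients, which is the kind of overestimate described in the example.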

Quiz

Which phrase from this government education survey question leads to response bias? "Do you think our world-leading education system needs improvement, or are you satisfied with the high-quality education your child receives?"

4. Question Order Bias

The order in which questions are asked can influence how respondents answer the rest of the survey.

Example: List of Qualities

A 1984 survey asked participants to choose the top 3 qualities a child should have from a list.

66% chose "honest" when it was higher on the list.

Only 48% chose "honest" when it was lower on the list.

5. Answer Order Bias

Answer order bias occurs when the order of your answer options influences which answer, or combination of answers, the respondent selects.

Example: Pet Peeves

SurveyMonkey asked 400 respondents to identify their biggest workplace pet peeves.

Half of the participants were given a randomized list of pet peeves to choose from, while the other half's list had a predetermined order.

"Rude coworkers" was a popular response with both groups, but the participants in the group with the predetermined list were more likely to choose an answer at the top of the list.
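One common way to reduce answer order bias is to shuffle the options for each respondent, as the randomized group in the example experienced. Below is a minimal Python sketch. The `shuffled_options` helper and every option other than "Rude coworkers" are hypothetical, and this is not a description of how SurveyMonkey implements randomization.

```python
import random

def shuffled_options(options, rng, pinned_last=()):
    """Return the answer options in random order, keeping pinned ones at the end."""
    movable = [o for o in options if o not in pinned_last]
    rng.shuffle(movable)  # shuffles the copy, so the original list is untouched
    return movable + [o for o in options if o in pinned_last]

# Hypothetical answer list; only "Rude coworkers" appears in the example above.
options = ["Rude coworkers", "Loud phone calls", "Messy shared kitchen", "Other"]

rng = random.Random(42)  # seeded only so the demo is repeatable
for respondent_id in range(3):
    print(respondent_id, shuffled_options(options, rng, pinned_last=("Other",)))
```

Catch-all choices such as "Other" are pinned to the end here because respondents usually expect to find them there. That is a design choice in this sketch, not a rule.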
